From Imitation to Understanding

The Emerging Ability of Robots to Learn Directly from Human Behavior

The field of robotics has faced a persistent bottleneck: data scarcity. Unlike large language models (LLMs), which feast on much of the public internet, robotic models have been starved of data.

Robots operate in the physical world, where gathering high-quality training data is slow, expensive, and constrained by hardware. We have long regarded the vast repositories of human video, from YouTube tutorials to first-person demonstrations, as a potential goldmine. However, the “domain gap” between a human hand and a robotic gripper has historically rendered this data largely unusable without complex, brittle translation layers.

New research into Vision-Language-Action (VLA) models, specifically the π0 model from Physical Intelligence, suggests we are crossing a critical threshold. The findings indicate that we are moving away from the era of bespoke, hand-coded robotic behavior and into an era of emergent capabilities. For technology executives, this signals a shift in investment strategy: the value proposition of robotics lies no longer just in hardware sophistication, but in the scale of the foundation models driving the hardware.

The Emergence of Cross-Embodiment Transfer

In AI, emergence refers to capabilities that are not explicitly programmed but appear once a model reaches a certain scale of compute and data. The study reports that when a VLA model is pre-trained on a sufficiently large and diverse dataset of robotic actions, it develops the ability to learn from human videos without being explicitly designed to do so.

This is a significant development. Historically, training a robot to “tidy a dresser” using videos of humans doing the same task required complex bridging techniques. Engineers had to mathematically map the kinematics of a human arm to a robot arm, often using generative intermediate steps or explicit domain adaptation. The π0 experiments show that if the base model is large enough, these manual bridges become obsolete. The model essentially figures out the mapping on its own.

In practical terms, the researchers found that simply adding human video data to the fine-tuning process of a large-scale pre-trained model roughly doubled performance on generalization tasks. The model, having seen enough diverse robotic data, began to “understand” that a human hand picking up an egg is semantically and functionally equivalent to a robotic gripper doing the same, despite the visual and physical differences.

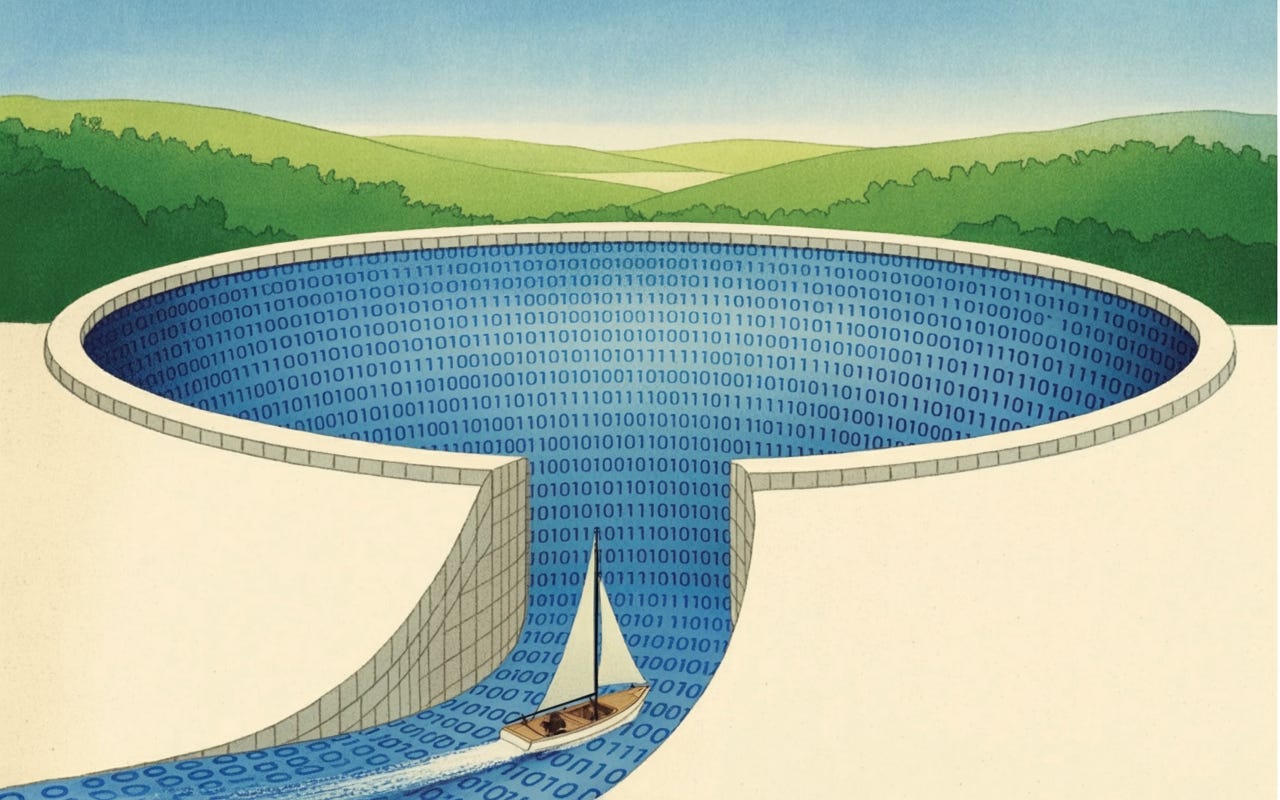

The Data Moat is Changing

For executive leadership, this shifts the definition of a competitive “data moat.” Previously, a robotics company’s advantage lay in its proprietary, clean, robot-specific telemetry. While this remains valuable, the ability to ingest human video alongside it offers a new vector for scale.

The research highlights a “log-linear” relationship: the effectiveness of human data transfer rises with the scale of the robot data seen during pre-training. A small model trained on limited robot data gains almost nothing from watching human videos; it cannot bridge the gap. However, a large model trained on diverse robot data gains substantially from the same human videos.
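To make the shape of such a curve concrete, here is a minimal sketch of one possible reading of “log-linear”: the benefit of human data grows by a fixed amount each time the robot pre-training data grows tenfold. The function name `human_transfer_gain`, the slope `gain_per_decade`, and the floor `min_scale` are all illustrative assumptions, not figures from the research.

```python
import math

def human_transfer_gain(robot_scale, gain_per_decade=0.3, min_scale=1.0):
    """Hypothetical log-linear curve (illustrative numbers only).

    robot_scale: amount of robot pre-training data, in arbitrary units.
    gain_per_decade: assumed gain added per 10x increase in robot data.
    min_scale: assumed scale below which human video does not help.
    """
    if robot_scale <= min_scale:
        return 0.0  # small models cannot bridge the gap
    return gain_per_decade * math.log10(robot_scale / min_scale)

# Each 10x step in robot data adds the same increment of benefit.
for scale in (1, 10, 100, 1000):
    print(scale, round(human_transfer_gain(scale), 2))
```

Under this reading, the payoff from human video keeps rising with pre-training scale, but each additional increment requires ten times more robot data than the last.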

This implies a “rich get richer” dynamic. Organizations that can afford the upfront cost of training massive foundational VLA models will unlock a data source (human video) that is effectively infinite and free, while smaller competitors will remain stuck collecting expensive robot-specific demonstrations.

Technical Simplicity as a Strategic Asset

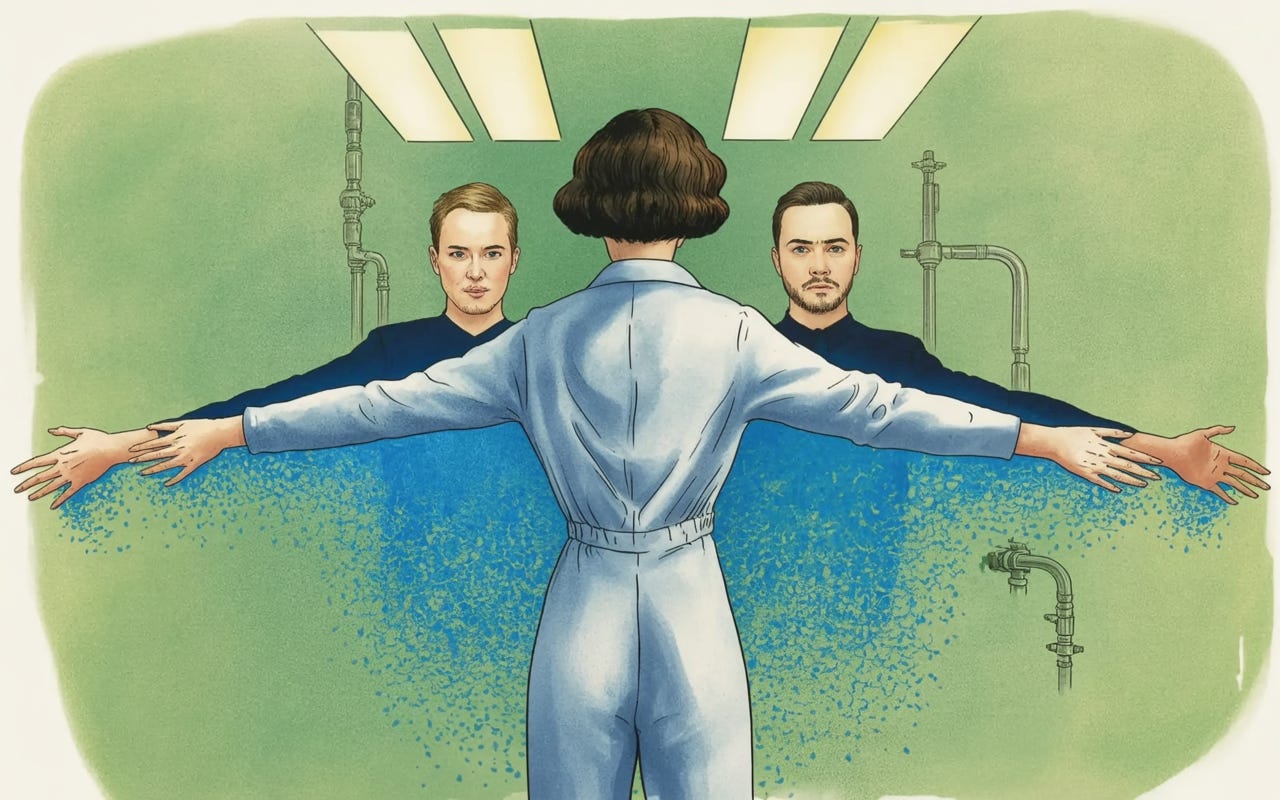

Perhaps the most compelling aspect of these findings for a CTO is the simplification of the technology stack. The researchers employed a “naive” co-fine-tuning method. They did not use complex generative masking or elaborate domain adaptation algorithms. They simply treated human hands as just another type of “robot embodiment.”
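The idea of treating human hands as one more embodiment can be sketched in a few lines. The example below is a simplified illustration, not the researchers' pipeline: the `Episode` type, the `mixed_batches` helper, and the `human_fraction` mixing ratio are hypothetical names and parameters chosen for clarity.

```python
import random
from dataclasses import dataclass

@dataclass
class Episode:
    embodiment: str   # e.g. "robot_arm" or "human_hand" (assumed labels)
    observation: list  # placeholder for image or state features
    action: list       # placeholder for the action trajectory

def mixed_batches(robot_eps, human_eps, batch_size, human_fraction, seed=0):
    """Yield fine-tuning batches that mix robot and human episodes.

    Human episodes go through the same path as robot episodes; the only
    difference is the embodiment label attached to each one.
    """
    rng = random.Random(seed)
    n_human = round(batch_size * human_fraction)
    n_robot = batch_size - n_human
    while True:
        batch = rng.choices(human_eps, k=n_human) + rng.choices(robot_eps, k=n_robot)
        rng.shuffle(batch)
        yield batch

robot = [Episode("robot_arm", [i], [i]) for i in range(10)]
human = [Episode("human_hand", [i], [i]) for i in range(10)]
batch = next(mixed_batches(robot, human, batch_size=8, human_fraction=0.5))
print([ep.embodiment for ep in batch])
```

The point of the sketch is what is absent: there is no retargeting step, no generative translation, and no separate loss for human data. The mixing ratio is the main knob, and its best value is an empirical question.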

The visualization of the model’s latent space (the internal mathematical representation of the world) helps explain why this works. t-SNE projections show that as pre-training scales, the clusters representing “human data” and “robot data” begin to overlap significantly. The model aligns these concepts naturally. This allows for a leaner engineering pipeline. We can reduce technical debt by stripping away the complex middleware previously needed to translate human actions for robots, relying instead on the raw capacity of the foundation model.
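A t-SNE plot is a qualitative picture. For teams that want a number to track, one simple stand-in (not the method used in the research) is to ask how often an embedding's nearest neighbor comes from the other domain. The function `cross_domain_nn_rate` below is a hypothetical helper, and the toy two-dimensional vectors stand in for real model embeddings.

```python
import math

def cross_domain_nn_rate(human_vecs, robot_vecs):
    """Fraction of embeddings whose nearest neighbor is from the other domain.

    Near 0.0: human and robot embeddings sit in separate clusters.
    Higher values: the two domains are interleaved in latent space.
    """
    points = [(v, "human") for v in human_vecs] + [(v, "robot") for v in robot_vecs]
    mixed = 0
    for i, (vec, label) in enumerate(points):
        nearest = min(
            (j for j in range(len(points)) if j != i),
            key=lambda j: math.dist(vec, points[j][0]),
        )
        if points[nearest][1] != label:
            mixed += 1
    return mixed / len(points)

# Toy data: two far-apart clusters versus fully interleaved points.
separated = cross_domain_nn_rate([(0, 0), (0, 1)], [(10, 10), (10, 11)])
interleaved = cross_domain_nn_rate([(0, 0), (2, 0)], [(1, 0), (3, 0)])
print(separated, interleaved)
```

Tracking a score like this across model sizes would give a rough, assumption-laden check on whether the overlap seen in the plots is actually increasing with scale.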

The Path to Learning Robots

For technology leaders, the takeaway is clear: we must stop viewing human data and robot data as incompatible silos. The barrier between the two is dissolving, provided we invest in the scale required to dissolve it. The future of general-purpose robotics lies not in programming every specific motion but in building models large enough to watch us, understand us, and eventually work alongside us. The capital expenditure required for large-scale pre-training is high, but the return, a robot that can learn from the collective visual history of humanity, is incalculable.