Beyond Brute Force Retraining

How Precision Model Editing Can Redefine the Unit Economics of Machine Learning Operations

In the current AI arms race, the brute-force era is reaching a point of diminishing returns. The predominant strategy, continuously retraining models on the most recent data, is labor-intensive and capital-hemorrhaging. It demands constant relabeling and vast computational resources, and its costs scale poorly. To reach the next frontier of utility, we must move beyond blunt retraining and toward systems that either generalize well from the start or adapt rapidly to novel circumstances. The future belongs to the precision update.

The End of Computational Waste

When a Large Language Model (LLM) harbors an outdated fact (for instance, the name of a newly elected prime minister), the industry standard has often been to initiate a fine-tuning run. However, retraining billions of parameters to fix a single data point is more than computationally wasteful; it is risky. Large updates can trigger “catastrophic forgetting,” where the model loses proficiency in unrelated tasks while learning the new one.

Enter model editing. This methodology allows practitioners to make targeted adjustments without the prohibitive cost of fine-tuning an entire neural network. By utilizing meta-learning techniques, we can train a specialized, smaller “model editor” on a curated dataset of desired edits. This editor acts like a surgical tool, modifying the network’s weights via targeted mathematical updates. Alternatively, it can operate through a non-parametric approach, maintaining an external memory bank of specific edits that bypasses the need to touch the foundational weights at all.
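To make “targeted mathematical update” concrete, here is a minimal sketch of a closed-form rank-one edit on a single linear layer. It is not the meta-learned editor described above; it is a simpler stand-in, and the names `matvec` and `rank_one_edit` are illustrative assumptions. The edit forces one input direction (`key`) to map to a new output (`target`) while leaving every input orthogonal to `key` untouched.

```python
def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def rank_one_edit(W, key, target):
    """Return an edited copy of W such that W_edited @ key == target.

    The correction is added only along the direction of `key`, so inputs
    orthogonal to `key` produce exactly the same output as before.
    """
    norm_sq = sum(k * k for k in key)
    if norm_sq == 0:
        raise ValueError("key must be a non-zero vector")
    # How far the current output for `key` is from the desired output.
    residual = [t - y for t, y in zip(target, matvec(W, key))]
    # W + outer(residual, key) / (key . key)
    return [
        [w + r * k / norm_sq for w, k in zip(row, key)]
        for row, r in zip(W, residual)
    ]


# Toy layer (identity) and a single edit; all values are illustrative.
W = [[1.0, 0.0], [0.0, 1.0]]
key = [1.0, 0.0]        # the input we want to "re-teach"
target = [0.0, 2.0]     # the output it should now produce
W_edited = rank_one_edit(W, key, target)
```

In this toy case only the column of `W` aligned with `key` changes, which is the sense in which the update is surgical: the cost is one outer product rather than a training run.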

The Hybrid Architecture: Memory Banks and Distillation

A sophisticated model doesn’t just need to “know” things; it needs to know when it doesn’t know things. With an external memory bank in place, a scope classifier can determine whether a new input falls within the scope of an existing edit and route it accordingly.

The Workflow: If an input matches a recent edit, a specialized secondary model processes it.

The Default: If no edit exists, the original foundational model takes the lead.

The Synthesis: As this memory bank grows, it can periodically be distilled back into the foundational model during scheduled maintenance, ensuring the system remains lean and efficient.
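The three steps above can be sketched as a small router. This is a minimal illustration under stated assumptions, not a production design: the scope classifier is a plain token-overlap score with an arbitrary threshold, the “secondary model” is reduced to returning the stored answer, and `EditRouter`, `add_edit`, and `drain_for_distillation` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Edit:
    prompt: str
    answer: str


def overlap(a: str, b: str) -> float:
    """Jaccard similarity over lowercase tokens (stand-in scope classifier)."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


@dataclass
class EditRouter:
    base_model: Callable[[str], str]
    threshold: float = 0.6  # assumed cut-off; would need tuning in practice
    memory: List[Edit] = field(default_factory=list)

    def add_edit(self, prompt: str, answer: str) -> None:
        """Ship a hotfix: store the edit without touching base weights."""
        self.memory.append(Edit(prompt, answer))

    def answer(self, query: str) -> str:
        # Workflow: if the query is in scope of an edit, use the edit.
        best = max(self.memory, key=lambda e: overlap(e.prompt, query), default=None)
        if best is not None and overlap(best.prompt, query) >= self.threshold:
            return best.answer
        # Default: otherwise fall through to the foundational model.
        return self.base_model(query)

    def drain_for_distillation(self) -> List[Tuple[str, str]]:
        """Synthesis: hand edits to a scheduled fine-tune, then clear memory."""
        pairs = [(e.prompt, e.answer) for e in self.memory]
        self.memory.clear()
        return pairs


router = EditRouter(base_model=lambda q: f"base: {q}")
router.add_edit("who is the prime minister", "<new PM name>")
in_scope = router.answer("Who is the prime minister now")
out_of_scope = router.answer("what is the capital of France")
```

Here `in_scope` comes from the memory bank and `out_of_scope` from the base model. A real system would likely replace the token-overlap score with a learned classifier, but the routing logic stays the same.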

For founders, this architecture offers a significant operational advantage: the ability to ship “hotfixes” for AI hallucinations or factual errors, potentially in seconds rather than weeks, because adding an entry to the memory bank requires no training run. For investors, this represents a path to lower OpEx and higher reliability, two key ingredients of enterprise-grade AI.

Toward Autonomous Knowledge Ingestion

The natural evolution of memory-based efficiency is the complete automation of the update cycle. We are moving toward self-updating models that dynamically ingest information. These systems can actively monitor contemporary news or real-time data feeds, detect contradictions within their existing knowledge base, and automatically generate the necessary edits to their own parameters.

Imagine a financial model that doesn’t wait for a data scientist to tell it that interest rates have changed. It “reads” the Federal Reserve’s announcement, identifies the conflict with its current predictive logic, and performs a localized weight update to align with reality. This level of autonomy transforms AI from a static snapshot of the past into a living, breathing participant in the present.
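The contradiction-detection step can be sketched in a few lines. This assumes an upstream component has already extracted structured facts from the feed, which is the hard part and is not shown; `propose_edits`, the fact keys, and the placeholder values are all hypothetical.

```python
def propose_edits(knowledge, incoming):
    """Compare incoming facts to stored ones and list the conflicts.

    Both arguments map a (subject, attribute) key to a value. Only facts
    that contradict an existing entry become edit requests; unseen keys
    are ignored here and could be handled by a separate ingestion path.
    """
    edits = []
    for key, new_value in incoming.items():
        old_value = knowledge.get(key)
        if old_value is not None and old_value != new_value:
            edits.append({"key": key, "old": old_value, "new": new_value})
    return edits


# Placeholder values only; no real figures are implied.
knowledge = {("central_bank", "policy_rate"): "rate_A"}
incoming = {
    ("central_bank", "policy_rate"): "rate_B",   # conflicts with stored fact
    ("central_bank", "chair"): "person_X",       # new key, not a conflict
}
pending = propose_edits(knowledge, incoming)
```

Each entry in `pending` would then be handed to whichever editing mechanism the system uses, whether a weight update or a memory-bank entry.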

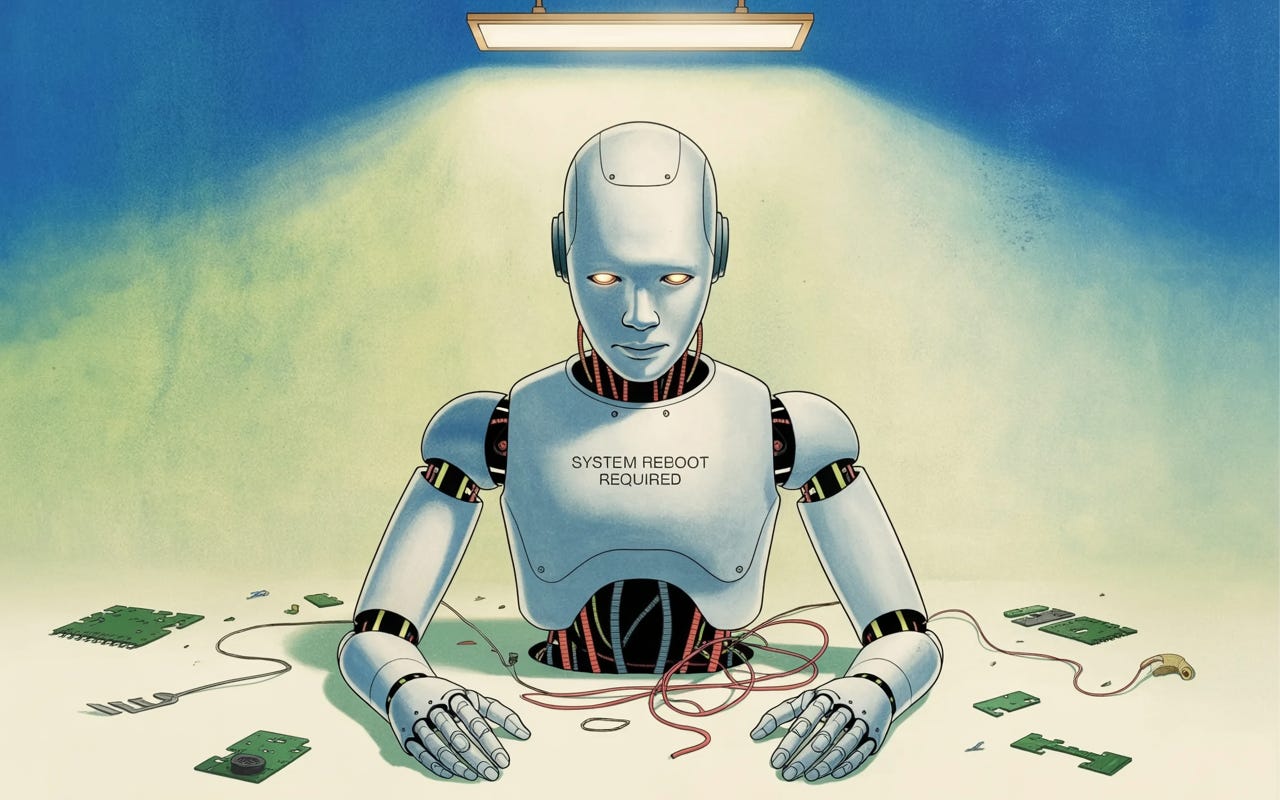

The Physical Frontier: Trial, Error, and Catastrophe

While language models thrive in digital sandboxes, physical operational systems, such as autonomous robotics and drones, offer a unique, high-stakes advantage. These agents interact with the physical world and make sequential decisions. In this arena, a failure is not a terminal error; it is a data point.

If a physical agent fails at a task, it can leverage its environment to autonomously retry the action using a slightly modified strategy on the fly. This in-situ adaptation is the holy grail of robotics.
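A minimal sketch of this retry-and-adjust loop, assuming the agent exposes a single tunable parameter and a success signal. The function name `retry_with_adaptation`, the grip-force example, and all numbers are illustrative assumptions, not taken from any particular robotics stack.

```python
def retry_with_adaptation(attempt, initial, adjust, max_tries=5):
    """Retry an action, modifying its parameter after each failure.

    attempt(param) -> bool reports success; adjust(param) returns the
    next parameter to try. Returns (param, tries) on success, or
    (None, max_tries) if every attempt fails.
    """
    param = initial
    for tries in range(1, max_tries + 1):
        if attempt(param):
            return param, tries
        param = adjust(param)  # slightly modified strategy for the next try
    return None, max_tries


# Toy task: a grasp succeeds once grip force reaches 20 (arbitrary units).
result = retry_with_adaptation(
    attempt=lambda force: force >= 20,
    initial=10,
    adjust=lambda force: force + 5,
)
```

The bounded `max_tries` matters: when the loop returns `None`, the agent has exhausted its local adjustments and needs a different recovery path.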

However, this capacity for trial and error introduces a severe complication: the unknown state.

When a system fails catastrophically (perhaps an autonomous delivery drone encounters an unmapped operational hurdle that knocks it off its flight path), it enters an entirely unknown state. In these moments, the model is no longer just “editing” a fact; it is navigating a reality for which it has no prior experience. Bridging the gap between targeted digital edits and robust physical recovery is the next great challenge for the industry.

The transition from “retrain-everything” to “edit-precisely” represents a fundamental shift in AI unit economics. It reduces the cost of accuracy, mitigates the risk of catastrophic forgetting, and paves the way for truly autonomous agents. The companies that dominate the next five years will not necessarily be those with the largest GPU clusters; the advantage may instead accrue to those that manage the lifecycle of knowledge most efficiently. As we move toward systems that can fix themselves in real time, value will migrate toward the architectures that handle these updates with the most surgical precision.