The Compiler for Human Intent

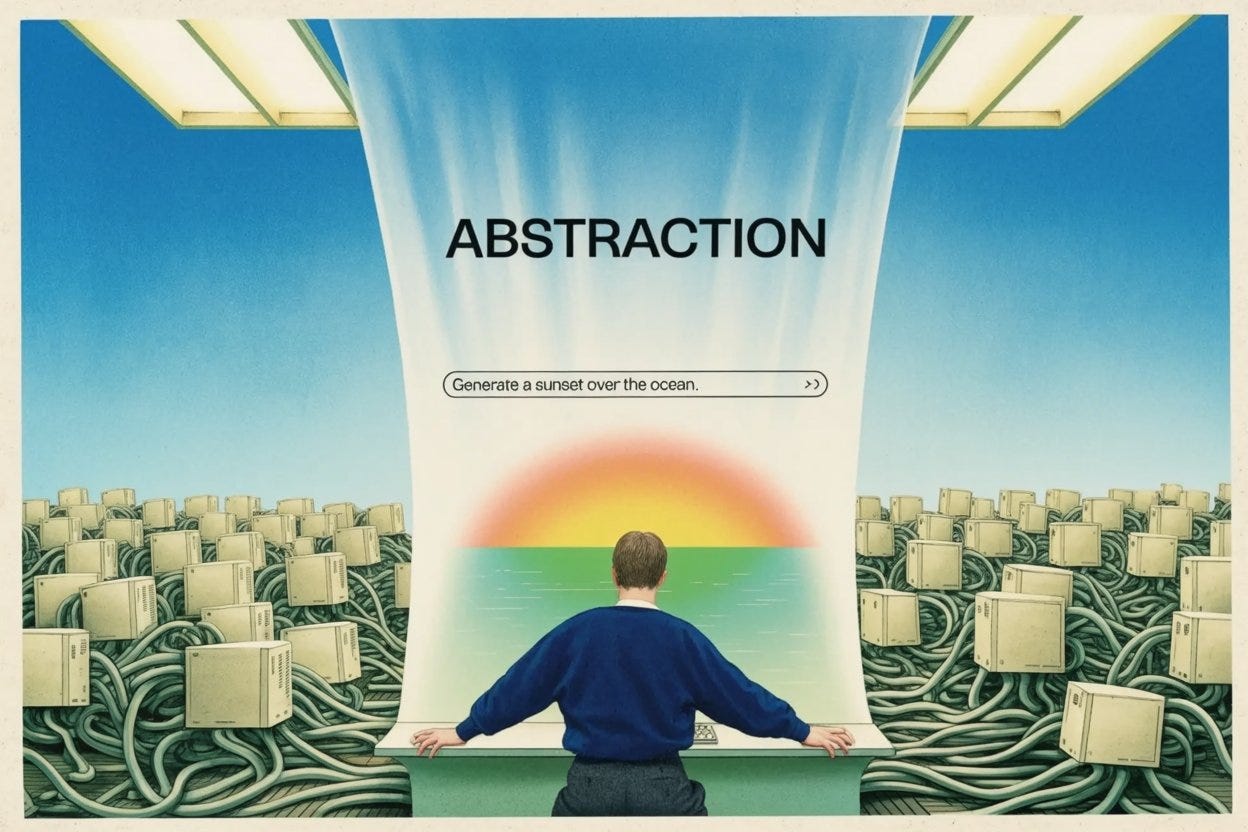

Agentic engineering and the next abstraction layer in the history of computing.

If you wanted to write software in the winter of 1946, you did not sit down at a keyboard. You walked inside the computer.

The machine was called the ENIAC. It was housed at the University of Pennsylvania, and it was not a sleek metal box on a desk. It was an industrial behemoth. It weighed thirty tons and filled an entire room with eighteen thousand vacuum tubes. When it ran, the room reached 120 degrees, and the machine needed its own dedicated cooling system to keep from cooking itself.

Rewiring the Room

But the most striking thing about the ENIAC was not its size. It was how you programmed it. A team of mathematicians, six women selected from a larger group of human “computers” doing ballistics math by hand, was tasked with making the machine calculate weapons simulations. Because the hardware was still classified, they were initially denied security clearance to even see the machine. They had to learn it from blueprints. Then, when they were finally allowed in the room, they had to literally rewire it. They carried thick black cables. They plugged them into switchboards. They physically routed electrical currents from one panel to another. They did not type commands. They built circuits. To run a different math problem, you had to physically rebuild the computer.

It was grueling. It was slow. And it was fundamentally limiting.

A few years later, a mathematician named Grace Hopper had an idea. Hopper was a Navy reservist who, in civilian life, was working as a senior mathematician on the UNIVAC I, the commercial successor to the ENIAC, built by some of the same engineers. She was tired of dealing with the microscopic, numerical language of the machine, and she proposed something the field considered absurd: a program that would automatically translate higher-level instructions into the zeroes and ones the machine required.

The conventional wisdom of the 1950s was swift and brutal. Her colleagues told her it wouldn’t work. Hopper later put it bluntly: “I had a running compiler and nobody would touch it. They told me computers could only do arithmetic.”

The Birth of Abstraction

Hopper built it anyway. She called it a compiler. She finished the first version, the A-0, in 1952. And in doing so, she did not just invent a piece of software; she introduced computing to a principle that would dictate the next seventy years of its history. She introduced a layer of abstraction.

The idea behind abstraction is simple. Think about how you drive a car. You do not need to understand the stoichiometric ratio of fuel to oxygen in the combustion chamber. You do not need to understand the hydraulic fluid dynamics of your braking system. You press the right pedal to go and the left pedal to stop. The pedals are an abstraction. They hide the mechanical complexity of the engine behind a simple, intuitive interface. You sacrifice granular control over the engine in exchange for the ability to actually get to the grocery store.

The history of software is entirely driven by abstractions stacked on top of each other.

First, we abstracted the wires. We replaced physical cables with punch cards. Then we abstracted the punch cards with Assembly language, which used short cryptic words instead of numbers. Then we abstracted Assembly with high-level languages like C and Python, where mathematical logic vaguely resembled human thought. Then we abstracted the text itself: researchers at Xerox PARC pioneered the graphical user interface in the 1970s, and Apple popularized it through the Lisa and Macintosh in the 1980s, hiding the green-on-black command line behind cartoon pictures of folders and trash cans.

Every New Layer Creates Panic

Every single time a new layer was added, the old guard panicked.

When high-level programming languages emerged, traditionalists warned that programmers were losing touch with the hardware. When the GUI arrived, purists scoffed that clicking on a little picture of a floppy disk was a toy, not real computing. If you could not see the code, they said, you were no longer in control.

And they were right. We did lose control. We lost the ability to manipulate individual vacuum tubes. We lost the ability to dictate exactly where every byte of memory was stored. But we traded that microscopic control for something more important: scale. By not having to worry about the wires, we had the cognitive bandwidth to invent the internet, the smartphone, and the global digital economy.

Which brings us to the present day.

The Rise of Agentic Engineering

Spend any time in tech circles right now and you will hear one phrase, spoken with a mixture of excitement and dread: agentic engineering.

For the past few years, AI has largely been a conversational tool. You type a question into a chatbot and it types an answer back: a powerful calculator for words. An agent is different. You give the agent a goal, such as “build a website for my new shoe company, research my top three competitors, price the shoes accordingly, and deploy it,” and it goes to work. It breaks the goal into smaller tasks. It opens a browser. It reads competitor sites. It writes the code. It tests the code. If it hits a bug, it reads the error message, rewrites the code, and tries again. It loops through problems until the goal is done.
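Stripped of the drama, that loop is small enough to sketch. What follows is a toy in Python, not how any real agent framework is built: the `Agent` class, its `plan` and `attempt` methods, and the rule that every task fails once before succeeding are all invented for illustration. A real agent would call a language model where this sketch splits a string.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Agent:
    """Toy agent: plan subtasks, then retry each one until it succeeds."""
    max_attempts: int = 3
    log: List[str] = field(default_factory=list)

    def plan(self, goal: str) -> List[str]:
        # Hypothetical planner: splits the goal on commas.
        # A real agent would ask a model to decompose the goal.
        return [step.strip() for step in goal.split(",") if step.strip()]

    def attempt(self, task: str, feedback: Optional[str]) -> str:
        # Hypothetical executor: fails on the first try of every task,
        # then succeeds once it has an error message to work from.
        if feedback is None:
            raise RuntimeError(f"bug while doing: {task}")
        return f"done: {task}"

    def run(self, goal: str) -> List[str]:
        results = []
        for task in self.plan(goal):
            feedback = None
            for _ in range(self.max_attempts):
                try:
                    results.append(self.attempt(task, feedback))
                    break
                except RuntimeError as err:
                    # Read the error, remember it, and try again.
                    feedback = str(err)
                    self.log.append(feedback)
            else:
                # Every attempt failed: stop instead of looping forever.
                raise RuntimeError(f"gave up on: {task}")
        return results


agent = Agent()
results = agent.run("research competitors, price the shoes, deploy the site")
```

The human supplies one line, the goal. Everything else is an ordinary loop with a retry limit.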

People are terrified of this.

We watch an AI agent navigating the internet on its own, making decisions, and correcting its own mistakes, and we feel a deep, primal anxiety. We think we’ve crossed some biological threshold. We think we’ve accidentally given birth to a new species of autonomous worker. We look at a machine making its own decisions and we assume we’ve lost control.

It turns out that isn’t true at all.

Agentic AI as Architecture, Not Alien Intelligence

Agentic AI is not a biological shift. It is an architectural one. When we look closely at what these systems are actually doing, the illusion of an alien intelligence fades. Agentic engineering is precisely what Grace Hopper was doing in 1952. It is the newest, highest layer of abstraction.

When you say “research my competitors,” you are not speaking to a conscious entity. You are interacting with a compiler. But instead of compiling source code into zeroes and ones, this compiler translates human intent into software execution.

When you double-click the icon for your web browser, you do not think about the millions of microscopic logic gates flipping open and closed inside your silicon. You just want to read the news. The operating system translates your double-click into a cascade of computational labor you never see.

When you ask an AI agent to build a website, the same process is occurring, just one floor higher in the building. The agent takes your intent. It translates it into a series of smaller prompts. Those prompts trigger API calls. The API calls trigger Python scripts. The Python scripts trigger C++ libraries. The libraries trigger Assembly instructions. And at the bottom of that unimaginably deep well, microscopic electrical currents flow through silicon — exactly as they did through the black cables of the ENIAC.
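The descent described above can be sketched as a chain of translators, each one rephrasing a request for the layer directly beneath it. This is a deliberately simplified Python illustration: the layer functions and the `lower` helper are hypothetical names, and real layers do far more than wrap strings.

```python
from typing import Callable, List

# A layer turns one request into one or more requests for the layer below.
Layer = Callable[[str], List[str]]


def agent_layer(intent: str) -> List[str]:
    # Hypothetical: the agent splits one intent into two smaller prompts.
    return [f"prompt[{intent} #{i}]" for i in (1, 2)]


def api_layer(prompt: str) -> List[str]:
    # Hypothetical: each prompt becomes one API call.
    return [f"api_call({prompt})"]


def script_layer(call: str) -> List[str]:
    # Hypothetical: each API call runs one script.
    return [f"script({call})"]


def lower(request: str, layers: List[Layer]) -> List[str]:
    """Push a request down through every layer, top to bottom."""
    requests = [request]
    for layer in layers:
        requests = [out for req in requests for out in layer(req)]
    return requests


work = lower("build site", [agent_layer, api_layer, script_layer])
# work == ["script(api_call(prompt[build site #1]))",
#          "script(api_call(prompt[build site #2]))"]
```

No layer knows anything about the layers two floors down. Each one only needs to speak to its immediate neighbor, which is the whole point of the stack.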

A Thicker Curtain

We are not building a replacement for humans. We are building a thicker curtain.

The anxiety we feel about AI agents is the same anxiety the switchboard operators felt about punch cards and the command-line coders felt about the mouse. We are standing at the edge of a new layer of abstraction, looking down, and feeling dizzy. We are mourning the loss of the granular control we used to have over our daily, repetitive digital tasks.

But the history of computing tells us this fear, while understandable, is misplaced.

If Grace Hopper were here to witness the rise of agentic engineering, she would not be afraid. She would smile. She would look past the autonomous loops, the reasoning models, and the self-correcting code, and she would recognize it for exactly what it is. Not an alien mind. A tool. The continuation of the dream she had seventy years ago.

When we stop worrying about how the machine works, we finally have the time to figure out what we actually want it to do.