The Last Mile of Robotics

How the probabilistic genius of the Large Language Model struggles to negotiate the friction, gravity, and entropy of the real world.

For centuries, we have dreamed of the artificial servant: the Golem, the Automaton, the silicon butler. For the last few decades, that dream has run up against a cruel irony known as Moravec’s Paradox. It is comparatively easy, it turns out, to make computers exhibit adult-level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility. We spent decades fearing a Terminator that could outsmart us, only to build machines that could defeat a grandmaster at chess but could not reliably turn a doorknob.

The arrival of Large Language Models (LLMs) has given the machine a voice. These probabilistic engines, trained on the accumulated scrapings of the internet, have mastered the flow of symbols. They can write sonnets, debug code, and, crucially, appear to reason through high-level tasks. Ask a modern AI to “prepare a dinner,” and it will dutifully produce a plan: boil the water, chop the carrots, sear the steak. The logic is sound; the syntax is perfect. The ghost in the machine has learned to speak.

But the machine itself—the gear, the servo, the gripper—remains stubbornly mute.

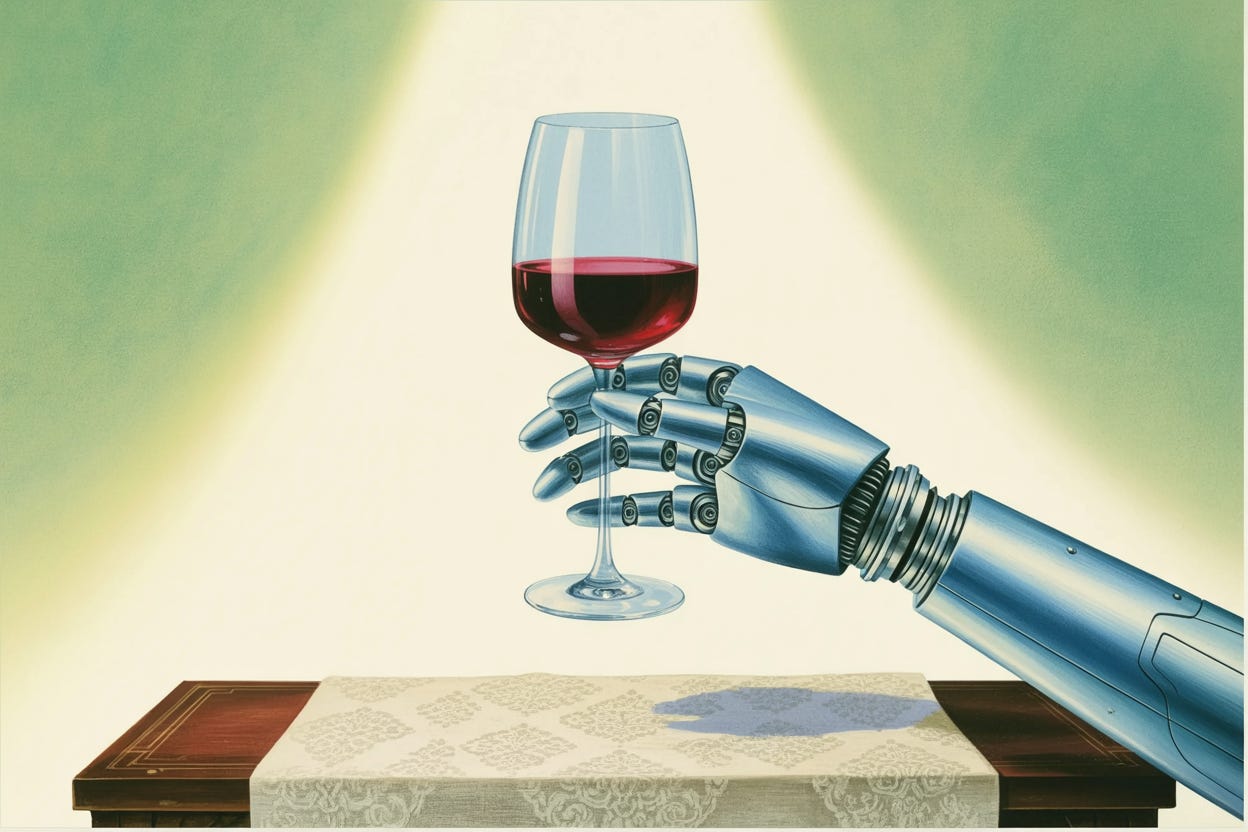

This is the central struggle of modern robotics: the translation of a semantic dream into a kinematic reality. The LLM exists in a universe of pure symbols, a friction-free environment where “grasp the glass” is just a token, a statistical probability following “reach for the table.” But to a physical robot, “grasp the glass” is a nightmare of physics. It is a calculus of millisecond-scale motion commands, joint velocities, and impedance control. It is the negotiation of gravity, friction, and the unforgiving solidity of matter.

The tragedy lies in the translation. The AI might understand that a wine glass is “fragile” (a semantic concept derived from reading a trillion words of human literature), but it does not know how many newtons of grip force it can apply without crushing the glass, or how little it can apply without letting it slip. The mind is a poet; the body is a clumsy infant.
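
To make that gap concrete, here is a minimal sketch of the physics the language model never sees, assuming a two-finger parallel gripper that holds an object purely by friction (a simple Coulomb model). Each fingertip can supply at most μN of friction for a squeeze force N, so lifting a mass m with upward acceleration a requires 2μN ≥ m(g + a); at the same time, the squeeze must stay below whatever force breaks the object. The function names, the 0.2 kg glass, the friction coefficients, and the 20 N crushing threshold are all illustrative assumptions, not measured values.

```python
G = 9.81  # gravitational acceleration, m/s^2


def min_grip_force(mass_kg, friction_coeff, accel=0.0, safety=1.5):
    """Smallest squeeze force (newtons) for a two-finger parallel gripper
    to hold an object against gravity by friction alone.

    Two contacts each supply at most mu * N of friction, so we need
    2 * mu * N >= m * (g + a). The safety factor (an assumed value)
    covers uncertainty in the friction coefficient.
    """
    return safety * mass_kg * (G + accel) / (2.0 * friction_coeff)


def grip_window(mass_kg, friction_coeff, crush_force, accel=0.0, safety=1.5):
    """Return (lower, upper) bounds on a safe squeeze force, or None if the
    object would slip before any force short of crushing could hold it."""
    lower = min_grip_force(mass_kg, friction_coeff, accel, safety)
    return (lower, crush_force) if lower <= crush_force else None


# Hypothetical wine glass: 0.2 kg, assumed to break at 20 N of squeeze.
dry = grip_window(mass_kg=0.2, friction_coeff=0.5, crush_force=20.0)
wet = grip_window(mass_kg=0.2, friction_coeff=0.05, crush_force=20.0)
if dry is not None:
    print(f"dry glass, safe squeeze: {dry[0]:.2f} N to {dry[1]:.1f} N")
print(wet)  # None: too slippery to hold without crushing
```

The word “fragile” carries none of these numbers. The same glass can be trivially easy to hold when dry and impossible to hold when wet, and nothing in the token “glass” says which case the robot is facing.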

This disconnect is exacerbated by a starvation of data. LLMs have feasted on the textual output of humanity, ingesting trillions of tokens drawn from a vast share of our written record. Robotics, by contrast, is data-poor: a dataset of robot interactions might contain a mere hundred thousand examples. The robot has no childhood; it has never spent years knocking over blocks to learn the secret language of mass and momentum. And when it learns by imitating people, it must map the intricate, compliant geometry of the human hand (twenty-seven degrees of freedom, wrapped in soft, sensing skin) onto a rigid, two-finger industrial gripper. It is like trying to play a Rachmaninoff concerto with a pair of pliers.

The struggle to bridge this gap is not merely a software problem; it is a confrontation with the hardware legacy of the industrial age. The factories of the twentieth century were populated by machines designed for stiffness and repetition. An automobile-building robot is a marvel of determinism, repeating the same trajectory within a fraction of a millimeter thousands of times a day. It is precise, expensive, and dangerous—a blind giant that will crush a human bone as easily as a rivet.

To bring robots into our homes and hospitals, we need the opposite: softness, compliance, and adaptability. We need machines that can fail safely. Yet the current reality of the robotics lab is a cycle of expensive fragility. Researchers spend seventy thousand dollars or more on a single robot arm that requires constant tinkering, a laboratory prima donna that breaks under the stress of the chaotic real world.
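
The difference between a stiff industrial arm and a machine that fails safely can be illustrated with impedance control, in which the arm behaves like a virtual spring and damper pulled toward its target rather than a rigid position servo. Below is a minimal one-dimensional sketch, assuming a point mass, a perfectly rigid wall, critical damping, and a 1 ms control loop; the function names and stiffness values are hypothetical, chosen only for illustration. The arm is commanded to a point 1 cm past an obstacle it did not know about, and once it comes to rest the force it presses with is simply stiffness times that error.

```python
import math


def impedance_force(stiffness, damping, x_target, x, v):
    """Virtual spring-damper: pull toward x_target, resist motion (target velocity 0)."""
    return stiffness * (x_target - x) - damping * v


def contact_force_after_reach(stiffness, x_target, x_wall,
                              mass=1.0, dt=0.001, steps=3000):
    """Simulate a 1-D point mass commanded toward x_target past a rigid wall at x_wall.

    The control loop runs every dt seconds (1 ms here). Damping is set to the
    critical value 2*sqrt(K*m) so the approach does not oscillate.
    Returns the force the controller is pushing into the wall on the last step.
    """
    damping = 2.0 * math.sqrt(stiffness * mass)
    x, v, f = 0.0, 0.0, 0.0
    for _ in range(steps):
        f = impedance_force(stiffness, damping, x_target, x, v)
        v += (f / mass) * dt   # semi-implicit Euler: update velocity first
        x += v * dt
        if x >= x_wall:        # the wall is rigid: clamp position, stop the mass
            x, v = x_wall, 0.0
    return f


# Same 1 cm error: the target sits 1 cm behind an unexpected wall.
soft = contact_force_after_reach(stiffness=200.0, x_target=0.11, x_wall=0.10)
stiff = contact_force_after_reach(stiffness=20000.0, x_target=0.11, x_wall=0.10)
print(f"compliant arm presses with {soft:.1f} N, stiff arm with {stiff:.1f} N")
# prints "compliant arm presses with 2.0 N, stiff arm with 200.0 N"
```

The whole argument fits in one line: a stiff controller turns a small perception error into a large force, while a low-stiffness impedance law turns the same error into a gentle push, at the price of sloppier tracking.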

Faced with these headwinds, the field is undergoing a pragmatic contraction. The vision of the universal humanoid, the shiny golden droid of science fiction, is receding in favor of the specialized tool. Consider the hospital corridor. Companies like Diligent Robotics have realized that the goal is not to replace the nurse but to spare the nurse the walking. By building a robot that simply shuttles supplies, pressing elevator buttons and opening doors along the way, they carve out a slice of utility that sidesteps the hardest problems of manipulation. It is a humble ambition: a machine that does not try to be a person but tries to be a helpful object.

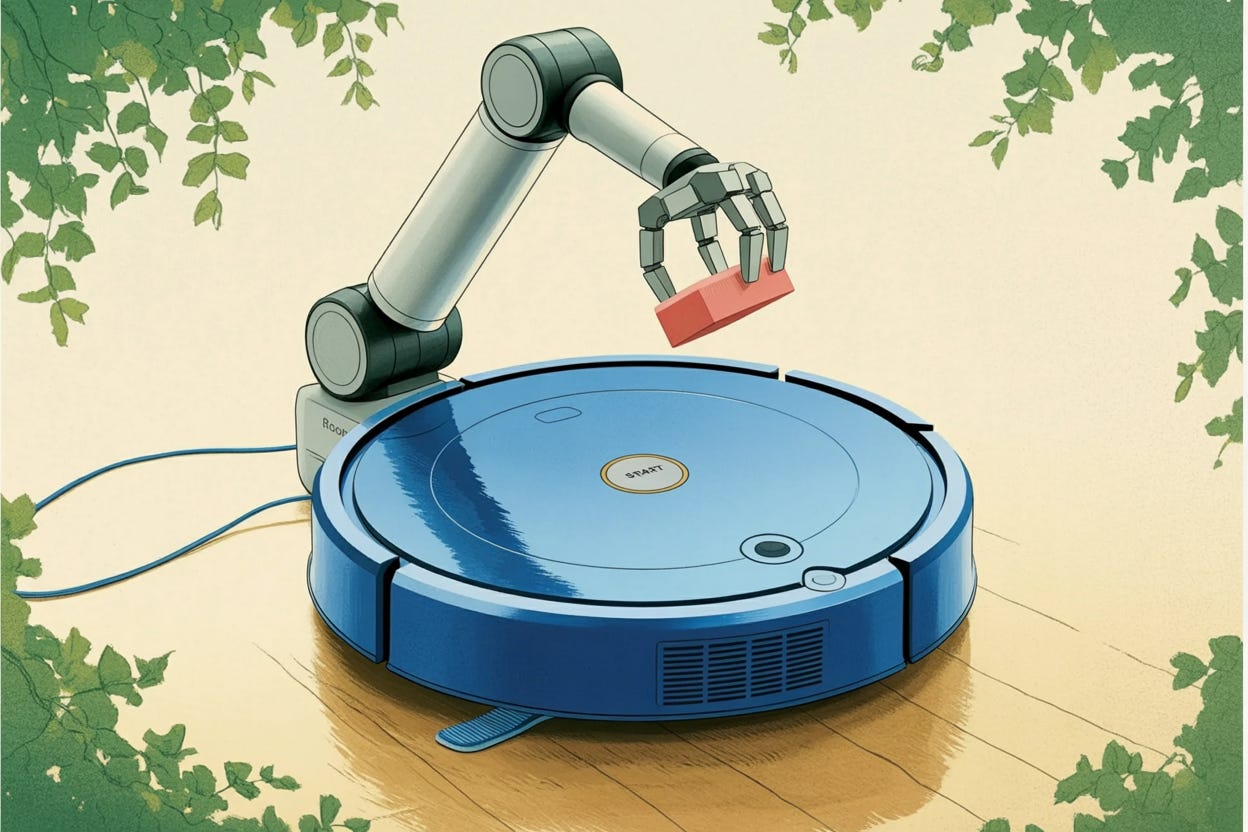

This philosophy is birthing a new morphology: the mobile manipulator. Picture a Roomba, that lowly autonomous puck, gifted with a single, dexterous arm. It is a “tidy-bot,” a creature of low center of gravity and modest reach, designed to scuttle through the living room rescuing parents from the hazards of scattered Lego bricks. By keeping the design simple and the cost low—perhaps eventually comparable to a luxury appliance rather than a luxury car—engineers hope to democratize the data collection process, allowing thousands of units to learn from the physical world simultaneously.

Yet as we stand on the threshold of this integration, the “last mile” problem looms large. By many accounts we are approaching ninety percent maturity, a deceptive plateau where the demos look flawless but the edge cases (the tangled cable, the unexpected stair, the confusing shadow) remain unsolved. The convergence of the dreaming mind of the LLM with the grinding reality of the robot body is not a singularity, but a slow, difficult weld. We are teaching the ghost how to inhabit the shell, attempting to ground the soaring statistics of language in the stubborn, unyielding truth of the physical world.