The Mother of All Demos

How Douglas Engelbart's vision of augmented intellect escaped the "paper simulator" trap

In December 1968, inside a dimly lit auditorium in San Francisco, an engineer named Douglas Engelbart sat down at a custom-built console. He was presenting to the Fall Joint Computer Conference. On the desk in front of him was a strange array of hardware: a standard keyboard, a five-key chord keyset, and a small wooden block with wheels that he called a “mouse”.

Engelbart began to type, and on a massive screen behind him, text appeared. But he did not just type. He linked. He expanded and collapsed hierarchical outlines. He brought up a live video feed of a colleague located thirty miles away, and the two of them edited the exact same document simultaneously.

This event is now known in computer science as “The Mother of All Demos”. In a single afternoon, Engelbart demonstrated the computer mouse, hypertext links, on-screen windows, video conferencing, and real-time collaborative text editing.

But to focus entirely on the hardware is to miss the point. Engelbart was not trying to build a better typewriter. He was trying to solve a fundamental mismatch between human cognition and the tools we use to record it. Engelbart believed his oN-Line System (NLS) was a way of “augmenting human intellect”. His vision was simple, yet profound. He believed a document should not be a dead record. It should be a shared, interactive, and stateful space.

The Paper Simulator

For decades, the software industry largely ignored the most important part of Engelbart’s vision. We adopted the mouse and the screen, but we constrained them within what early computing pioneers called the “paper simulator” model.

Think about Microsoft Word or the Portable Document Format (PDF). These applications were strictly engineered to replicate the physical constraints of printed media within a digital space. We create static artifacts. They are intended solely for human consumption. We accept this as the natural order of computing. We write. We print. We file.

But that order was never inevitable. Parallel to this mainstream trajectory, a radically different lineage of software design has continuously evolved.

This alternative lineage operates on a concept called the Active Document. The Active Document is not a static record. It is a highly programmable, interactive, and collaborative computational medium where prose, data, and executable code seamlessly intertwine.

We see flashes of this lineage throughout computing history. In 1984, the algorithm pioneer Donald Knuth introduced Literate Programming. Knuth argued that a programmer should act as an essayist. The goal was to weave together a narrative explaining the logic of a program to other humans, with the program's source code embedded right inside the prose.

In 1987, Apple released HyperCard. It blurred the lines between a hypermedia authoring tool and a graphical application builder. It featured a built-in language called HyperTalk that allowed non-programmers to attach executable logic to simple user interface elements. Domain experts who were not formally trained in computer science could suddenly build bespoke tools. The original Macintosh version of the groundbreaking video game Myst was built in HyperCard.

These were brilliant, visionary systems. They proved that documents could function as full-fledged applications. But they were fundamentally constrained by their local, single-machine architecture. And so, when the World Wide Web arrived, the lightweight, static HTML document won out. The Active Document was pushed to the fringes.

The Cloud Trap

If we fast forward to the 2010s, it looks like the Active Document finally achieved mainstream victory. This was the era of the commercial “docs-as-apps” movement. Platforms like Notion and Coda introduced highly aesthetic, block-based architectures. Paragraphs of text and nested relational databases could exist as modular, drag-and-drop entities. A standard document could be transformed into a functional CRM or an inventory tracker.

It felt like the democratization of software. It felt like Engelbart’s vision had finally arrived in the enterprise.

It turns out that isn’t true at all.

These massive cloud monoliths introduced severe, hidden costs. They trapped organizational data inside opaque, proprietary cloud databases. Because edits must be synchronized with a remote server, large documents can suffer from noticeable latency. An engineering team cannot simply clone a Notion database to a local machine or audit it via standard version control pipelines.

Most critically, these platforms are fundamentally incompatible with the newest, most important actors in software engineering. The industry is rapidly shifting toward agentic workflows. Autonomous AI coding agents are now designed to read, navigate, and edit codebases. But these AI agents are isolated from the “docs-as-apps” world. They cannot click through a proprietary cloud user interface.

AI agents excel at reading, writing, and manipulating standard plain-text files on a local file system.

The Return to Plain Text

To solve the crisis of the modern workspace, engineers realized they had to go backward. They had to abandon the proprietary cloud database and return to the simplest medium in computing. They returned to plain text.

Specifically, they turned to Markdown. Created in 2004, Markdown is a lightweight, human-readable syntax that became a de facto standard for READMEs, documentation, and technical writing on the web. It is plain text. It is immune to vendor lock-in. But Markdown is static. To make it alive, developers created MDX. MDX extends Markdown with JSX, so developers can embed interactive React components directly within an otherwise ordinary Markdown file.
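To make the idea concrete, here is a minimal sketch of what such a file can look like and how a tool might spot the components inside it. The document text, the `LatencyChart` component, and its props are all hypothetical, and the regular expression is only a rough heuristic: a real MDX compiler parses JSX properly, while this pattern would also match tags that appear inside code samples.

```python
import re

# Hypothetical MDX document: ordinary Markdown prose plus one JSX component tag.
SAMPLE_MDX = """\
# Service health

Latency for the checkout API over the last hour:

<LatencyChart service="checkout" window="1h" />
"""

# Heuristic only: by convention, JSX component names start with an uppercase letter.
COMPONENT_TAG = re.compile(r"<([A-Z][A-Za-z0-9]*)\b")


def find_components(mdx_source: str) -> list:
    """Return the component names referenced in the text, in order of appearance."""
    return COMPONENT_TAG.findall(mdx_source)


if __name__ == "__main__":
    print(find_components(SAMPLE_MDX))
```

Everything outside the component tag remains readable Markdown, which is why the same file works in a plain text editor, a diff tool, or a renderer that understands the components.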

This synthesis is the foundation of a new generation of professional workspaces, exemplified by systems like Moment.dev.

Moment.dev does something highly counterintuitive. It completely rejects the proprietary remote database. Every single document, dashboard, and widget built within the platform is stored as a plain Markdown file directly on the user’s local disk.

This relies on the principles of Local-First Software. By keeping the files on the local disk, engineers retain a full version history and control over their own data. To handle collaboration without depending on a central cloud database, Moment utilizes purpose-built, high-performance distributed editing algorithms. These algorithms merge edits made offline once the network connection returns.
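The source does not say which merge algorithm Moment actually uses, so the following is only an illustration of the general idea, not a description of the product. It sketches one of the simplest conflict-free approaches, a last-writer-wins map keyed by block: each replica stamps its edits with a logical clock, and merging keeps the newest version of every block. The `BlockVersion` type, the block ids, and the replica names are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class BlockVersion:
    text: str
    timestamp: int  # logical clock value assigned by the editing replica
    replica: str    # replica id, used only to break timestamp ties


def merge(a: Dict[str, BlockVersion], b: Dict[str, BlockVersion]) -> Dict[str, BlockVersion]:
    """Merge two replicas' block maps, keeping the newest version of each block.

    Assumes a replica never reuses a timestamp for two different edits.
    """
    merged = dict(a)
    for block_id, theirs in b.items():
        ours = merged.get(block_id)
        if ours is None or (theirs.timestamp, theirs.replica) > (ours.timestamp, ours.replica):
            merged[block_id] = theirs
    return merged


if __name__ == "__main__":
    # Two devices edited the same document while offline.
    laptop = {
        "intro": BlockVersion("Draft intro", 1, "laptop"),
        "todo": BlockVersion("Ship v2", 3, "laptop"),
    }
    desktop = {"intro": BlockVersion("Polished intro", 2, "desktop")}
    for block_id, version in sorted(merge(laptop, desktop).items()):
        print(block_id, "->", version.text)
```

Because the merge picks a maximum per block, it gives the same result regardless of the order in which replicas sync, and merging the same state twice changes nothing. The trade-off is that one of two concurrent edits to the same block is discarded; production collaborative editors typically use finer-grained sequence CRDTs to preserve both.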

Furthermore, because these are just text files, they can be managed by version control systems like Jujutsu (jj). Jujutsu, which is compatible with Git repositories, reduces the cognitive overhead of traditional Git by treating the working copy as an ongoing commit that is automatically amended as files change. It provides a robust versioning backend for collaboration on local text.

The Cognitive Environment

Let us zoom back out. What does this highly technical architecture actually mean for the humans using it?

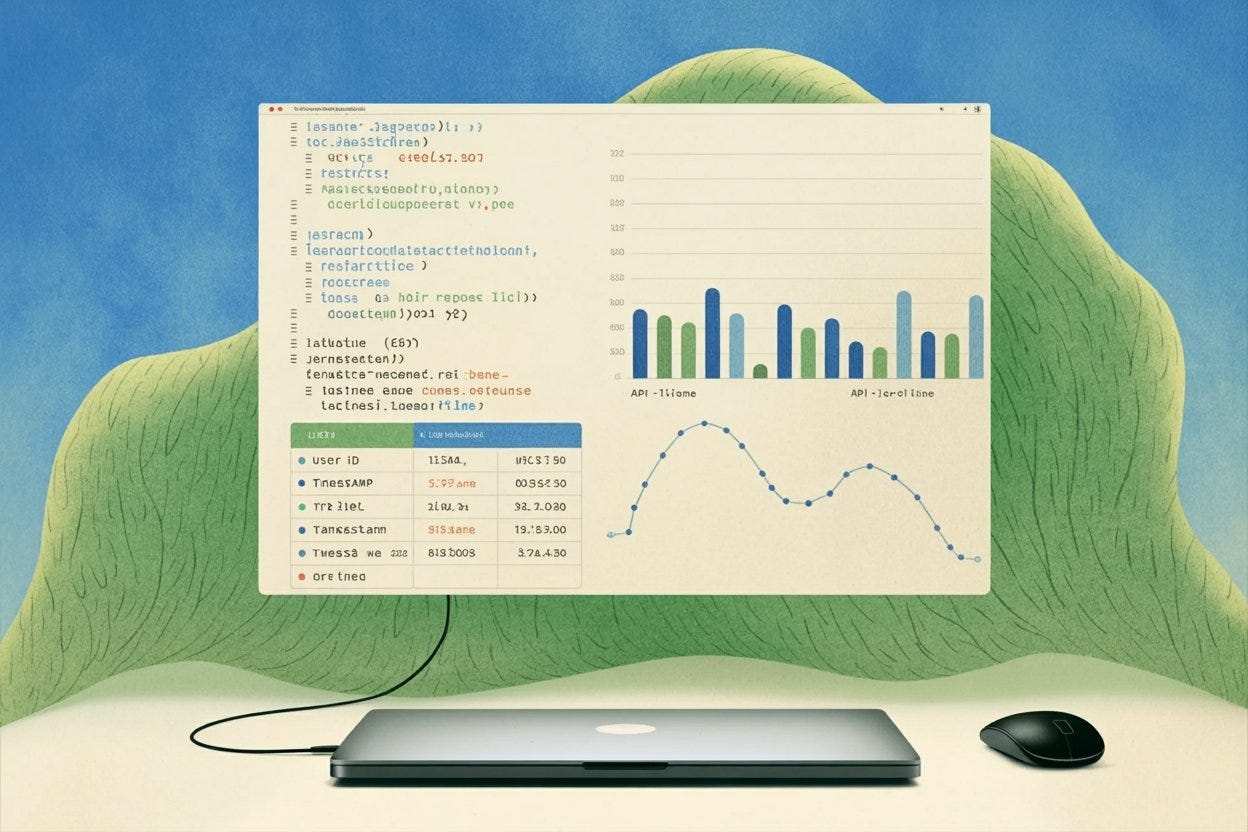

It means that infrastructure developers and platform engineers can write custom JavaScript to embed fully functional applications directly into a local document. They can build live database browsers or API metric visualizers right inside the text. Editing no longer waits on a network round trip. Vendor lock-in is gone.

But the true magic lies in interoperability. Because Moment operates entirely on local .md files, it acts as a native, frictionless habitat for AI agents. An engineer can simply prompt a local AI agent to generate a custom monitoring dashboard. The agent independently writes the required React components directly into the local Markdown file, and the application renders in place.
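The file-level half of that workflow is ordinary text manipulation, which is why it suits agents so well. The sketch below shows the kind of edit an agent might perform: appending a new section with a component tag to a local Markdown file. The helper names, the `LatencyChart` component, and the file layout are hypothetical and are not taken from Moment; attribute values are written without escaping, so this is a sketch rather than a safe serializer.

```python
from pathlib import Path


def render_tag(component: str, props: dict) -> str:
    """Render a self-closing JSX-style tag with simple string props."""
    attrs = " ".join(f'{key}="{value}"' for key, value in props.items())
    return f"<{component} {attrs} />" if attrs else f"<{component} />"


def append_component(doc: Path, heading: str, component: str, props: dict) -> None:
    """Append a new section containing one component tag to a Markdown file."""
    existing = doc.read_text(encoding="utf-8") if doc.exists() else ""
    # Keep any existing content, then separate sections with one blank line.
    parts = [existing.rstrip("\n")] if existing.strip() else []
    parts.append(f"## {heading}\n\n{render_tag(component, props)}")
    doc.write_text("\n\n".join(parts) + "\n", encoding="utf-8")


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        notes = Path(tmp) / "ops-notes.md"
        notes.write_text("# Ops notes\n", encoding="utf-8")
        append_component(notes, "Checkout latency", "LatencyChart", {"service": "checkout"})
        print(notes.read_text(encoding="utf-8"))
```

Because the result is a plain file on disk, the change shows up as a normal diff that a human can review and version like any other edit.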

This is the ultimate evolution of Douglas Engelbart’s 1968 vision. We are finally abandoning the simulation of physical paper. By storing highly complex applications as simple, version-controlled text files, systems like Moment have realized the promise of the Active Document. They are building interconnected, dynamic cognitive environments.