The Physical Intelligence Frontier

Building the Foundation Models for Robotics

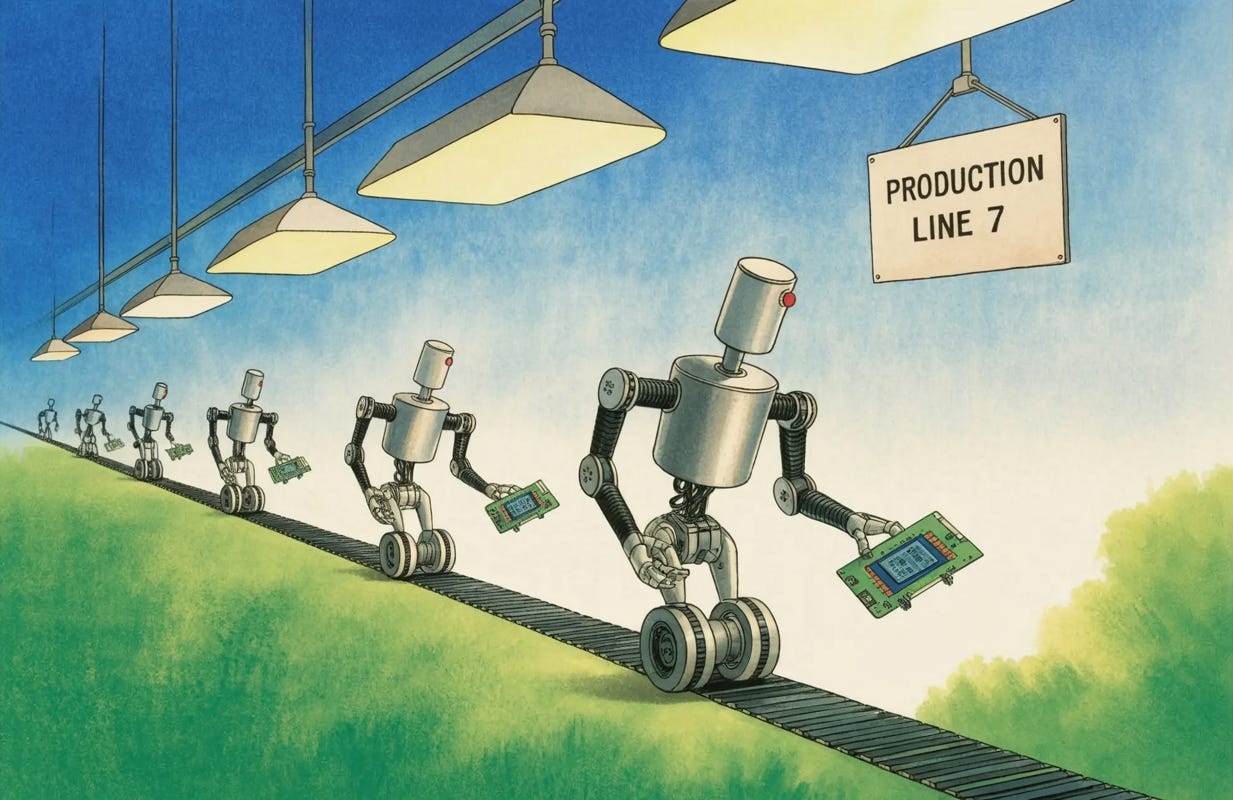

The history of robotics has long been a tale of extreme specialization: “one robot, one task.” For decades, the industry’s greatest successes have lived within the rigid, highly constrained environments of automotive assembly lines and distribution centers.

These systems are marvels of engineering, yet they are fragile: change the lighting or move an object two inches to the left, and the system collapses. The holy grail has always been general-purpose AI for the physical world. Until recently, though, the path to it was obscured by the sheer complexity of motor control and by the lack of a large, shared corpus of motion data, a “Wikipedia for motion.”

The current shift in the field is driven by a fundamental realization: robotics is not just a hardware problem but a data and architecture problem. Large Language Models (LLMs) transformed how we work with text by scaling on massive, diverse datasets, and robotics is now entering a similar era of “generalist models.” The objective is to move away from deep but narrow application silos and toward a single neural network that can control any robot, with any number of joints or limbs, in any scenario. This transition mirrors the broader evolution of software, away from custom-coded solutions and toward versatile foundation models that can be adapted to many downstream tasks.

The Data Bottleneck and the Reality of Real-World Interaction

The primary obstacle to this generalist future is the scarcity of diverse robotic data. Language models can draw on the vast expanse of the internet, but robots need physical interaction data to learn the nuances of contact and motor control. Current data-collection efforts therefore center on teleoperation: human operators puppeteer robots through complex tasks such as folding laundry, cleaning tables, or assembling boxes, building a bread-and-butter dataset of real-world experience. Simulated data has its place, but there is no substitute for the messy, unpredictable physics of the real world, where the difference between a successful grasp and a failed one is measured in millimeters.

To overcome this data deficit, the next generation of robotic intelligence builds on pre-trained Vision-Language Models (VLMs). These models have already absorbed rich conceptual knowledge from internet-scale data, and a robot that inherits this knowledge can understand tasks involving objects or people it has never physically encountered. For example, a robot policy built on such a model can recognize a specific celebrity’s image or a niche product because that visual concept is encoded in the model’s weights, even if it never appeared in the robot’s own motor-training data. This transfer learning is the bridge between digital intelligence and physical execution.

Architectural Innovations: Hierarchy and Reasoning

As these models scale, the focus is shifting toward more sophisticated architectures, such as Hierarchical Interactive Robotics. A common failure in traditional robotics is the “horizon” problem: a robot might excel at a half-second motor command but lose the plot during a three-minute task like making a sandwich. A hierarchical approach splits the labor. A high-level model reasons through the logical steps (e.g., “pick up the tomato,” “avoid the pickles”), while a low-level model generates the precise motor commands each step requires. This structure also enables real-time human interaction: a user can provide situated corrections or dietary preferences mid-task, and the robot adjusts its plan without starting over.
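
The hierarchical split can be sketched as a toy control loop. This is an illustration under stated assumptions, not the architecture of any particular system. `HighLevelPlanner`, `LowLevelPolicy`, `RECIPE`, and `run_task` are hypothetical names. The planner is a hard-coded recipe filter standing in for a learned vision-language model. The low-level policy only reports how many control ticks it would use at an assumed 50 Hz, where a real policy would emit joint targets. A user correction such as “no pickles” is applied between high-level steps, so the remaining plan changes without restarting the task.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical high-level plan; a real system would generate steps with a learned model.
RECIPE = ["pick up bread", "add tomato", "add pickles", "add lettuce", "place top bread"]


@dataclass
class HighLevelPlanner:
    """Toy stand-in for a learned high-level policy that emits language subtasks."""
    excluded: set[str] = field(default_factory=set)

    def add_correction(self, ingredient: str) -> None:
        # Record a situated correction such as "no pickles".
        self.excluded.add(ingredient)

    def next_subtask(self, done: list[str]) -> str | None:
        # Return the first step that is neither finished nor ruled out by a correction.
        for step in RECIPE:
            if step in done or any(item in step for item in self.excluded):
                continue
            return step
        return None


@dataclass
class LowLevelPolicy:
    """Toy stand-in for a learned motor policy running at a fixed control rate."""
    control_hz: int = 50               # assumed control frequency
    seconds_per_subtask: float = 0.5   # assumed duration of each short motor skill

    def execute(self, subtask: str) -> int:
        # A real policy would send a joint-target command on every control tick;
        # this stub only reports how many ticks the subtask would take.
        return round(self.control_hz * self.seconds_per_subtask)


def run_task(corrections: dict[int, str]) -> tuple[list[str], int]:
    """Run the loop; `corrections` maps 'steps completed so far' to a user correction."""
    planner, policy = HighLevelPlanner(), LowLevelPolicy()
    pending = dict(corrections)
    done: list[str] = []
    total_ticks = 0
    while True:
        # Apply a correction that arrives mid-task before choosing the next step.
        if len(done) in pending:
            planner.add_correction(pending.pop(len(done)))
        subtask = planner.next_subtask(done)
        if subtask is None:
            break
        total_ticks += policy.execute(subtask)
        done.append(subtask)
    return done, total_ticks


if __name__ == "__main__":
    steps, ticks = run_task({2: "pickles"})
    print(steps)  # ['pick up bread', 'add tomato', 'add lettuce', 'place top bread']
    print(ticks)  # 100
```

The point of the split is that the planner only reasons over a handful of language steps, while the fast inner loop never has to track the three-minute task as a whole.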

This progress in reasoning is bringing robotics closer to a “Cambrian explosion” of hardware. Once the underlying physical intelligence is sufficiently robust, we are likely to see a vast ecosystem of robot forms: some optimized for the kitchen, others for the laundry room, and some for heavy manufacturing. The humanoid form factor is a popular focus because our world is “built for humans,” but the true future may lie in a diversity of cheaper, specialized platforms, all powered by a universal foundation model.

The Road Ahead: Robustness and Open Ecosystems

The ultimate goal is a world where robots are as commercially viable and ubiquitous as the various appliances in our kitchens today. Achieving this requires a commitment to open-source development and cross-lab collaboration. By pooling data across different robot embodiments and sharing model checkpoints, the community is finding that a “universal” model often performs better on a specific robot than a model trained only on that specific machine. This suggests that the “bet” on generalizability is paying off; the collective intelligence of many robots is greater than the sum of its parts.
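
The article does not say how one model can serve robots with different numbers of joints. One common approach, assumed here purely for illustration, is to zero-pad every robot's action vector to a shared maximum size and mask the padded dimensions out of the training loss. With that in place, samples from a 7-joint arm and a 14-joint bimanual rig can share a batch. `MAX_ACTION_DIM`, `Sample`, `pad_action`, `pool`, and `masked_mse` are hypothetical names, and plain Python lists stand in for tensors.

```python
from __future__ import annotations

from dataclasses import dataclass

MAX_ACTION_DIM = 14  # hypothetical shared action size, e.g. two 7-joint arms


@dataclass
class Sample:
    robot: str
    action: list[float]  # native action vector; its length depends on the robot


def pad_action(action: list[float], max_dim: int = MAX_ACTION_DIM) -> tuple[list[float], list[float]]:
    """Zero-pad an action to max_dim and return a mask marking the real dimensions."""
    if len(action) > max_dim:
        raise ValueError(f"action has {len(action)} dims, but max_dim is {max_dim}")
    pad = max_dim - len(action)
    return action + [0.0] * pad, [1.0] * len(action) + [0.0] * pad


def pool(samples: list[Sample]) -> list[tuple[str, list[float], list[float]]]:
    """Mix samples from different robots into one uniformly shaped batch."""
    return [(s.robot, *pad_action(s.action)) for s in samples]


def masked_mse(pred: list[float], target: list[float], mask: list[float]) -> float:
    """Mean squared error over real dimensions only; padded slots contribute nothing."""
    total = sum(m * (p - t) ** 2 for p, t, m in zip(pred, target, mask))
    return total / sum(mask)


if __name__ == "__main__":
    batch = pool([Sample("single_arm", [1.0] * 7), Sample("bimanual", [0.2] * 14)])
    _, target, mask = batch[0]
    pred = [0.0] * 7 + [5.0] * 7  # arbitrary predictions in the padded slots
    print(masked_mse(pred, target, mask))  # 1.0
```

Masking matters because the padded slots carry no real signal. Without it, the model would be penalized for whatever it predicts in dimensions a given robot does not have.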

As we look toward the future, the challenge remains one of endurance and iteration. The physical world is an unforgiving environment with little tolerance for error, but the integration of memory, diverse sensing, and scaled-up data collection is rapidly closing the gap. The goal is no longer just to make a robot do one thing well, but to build a machine that can learn to do anything, anywhere.