The Soul of a Machine

Why Looking Human Doesn't Mean Thinking Human

In 1954, a man named George Devol filed a patent for a device that would eventually become the Unimate, the world’s first industrial robot. If you looked at the Unimate, it didn’t look like a person. It looked like a giant, motorized crane arm. In its first factory job, at a General Motors plant in 1961, it did one thing: pick up a piece of hot die-cast metal and drop it into a pool of cooling liquid.

It was a marvel of engineering. But it was also, in a very fundamental sense, profoundly stupid.

If you moved that piece of metal two inches to the left, the Unimate would still reach for the old spot. It would grasp at thin air, oblivious to the world around it. It lived in a world of pre-scripted actions. It followed a rigid, mathematical geometry that assumed the world was static, predictable, and perfectly aligned. For seventy years, this has been the “Grammar of Work.” If you wanted a machine to do something, you had to speak its language—a language of X, Y, Z coordinates and if-then statements.
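To make the two-inch problem concrete, here is a minimal sketch rather than a description of how the Unimate was actually programmed. The taught position, the gripper tolerance, and the function names are all assumptions chosen for illustration, and the "sensor" is a stand-in that simply reports the part's true position.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # (x, y) position on the work surface, in inches

TAUGHT_PICKUP: Point = (10.0, 4.0)  # hypothetical spot stored at programming time
GRIPPER_TOLERANCE = 0.5             # assumed: largest miss that still yields a grasp


def grasp_succeeds(target: Point, part: Point) -> bool:
    """The grasp works only if the arm ends up close enough to the part."""
    return math.dist(target, part) <= GRIPPER_TOLERANCE


def scripted_pick(part: Point) -> bool:
    """Old grammar: always reach for the taught spot, wherever the part is."""
    return grasp_succeeds(TAUGHT_PICKUP, part)


def sensed_pick(part: Point) -> bool:
    """New grammar: reach for where a (hypothetical) sensor says the part is."""
    observed = part  # stands in for a perception step
    return grasp_succeeds(observed, part)


moved_part = (8.0, 4.0)  # the same part, two inches to the left
print(scripted_pick(TAUGHT_PICKUP))  # True
print(scripted_pick(moved_part))     # False
print(sensed_pick(moved_part))       # True
```

The scripted version never consults the part's position when choosing where to reach, which is the whole failure in one line.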

But there is a problem. The world isn’t a grid. It’s messy. It’s rusted. It’s unpredictable. And we are finally realizing that the secret to the future of labor isn’t about writing better scripts. It’s about teaching machines how to have sense.

The Vision Gap

Consider the act of picking up a wrench. To you and me, this is a trivial task. But to a computer, a wrench doesn’t exist. There is only a grid of pixels, a chaotic soup of color values and light intensities. Traditional AI looks at a wrench and sees data.

This is where the new architecture of physical AI, driven by Vision-Language Models (VLMs), changes the picture. A VLM is trained on images paired with text, so when it looks at a factory floor, it isn’t just processing images; it is attaching words and context to what it sees. It can label the brown flake on a bolt as “rust.” It can connect a “wrench” to the job of applying “torque.”

The machine is no longer a blind laborer following a map; it is a scout. It sees the world as we see it.

But seeing is only half the battle. The real magic happens when you move from seeing to doing—from the VLM to the VLA (Vision-Language-Action). In the old world, if you wanted a robot to pick up a new type of screw, you had to spend weeks programming the specific geometry of the grasp. In the new world? You convey your intent. You tell the robot to “pick,” and it figures out the physics on the fly.
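The shape of that shift can be sketched as an interface: pixels and an instruction go in, an action comes out. Everything below is hypothetical. The `Policy` protocol, the `Action` fields, and the brightest-pixel rule are illustrative stand-ins, not the API of any real VLA model, and a real system would put a learned model where the toy rule sits.

```python
from dataclasses import dataclass
from typing import List, Protocol

Image = List[List[int]]  # grayscale pixel grid, one intensity value per pixel


@dataclass(frozen=True)
class Action:
    row: int             # pixel row to move the gripper over
    col: int             # pixel column to move the gripper over
    close_gripper: bool  # whether to grasp on arrival


class Policy(Protocol):
    """Hypothetical interface: an image and an instruction in, an action out."""

    def act(self, image: Image, instruction: str) -> Action: ...


class BrightestPixelPolicy:
    """Toy stand-in for a learned model: treats the brightest pixel as the object."""

    def act(self, image: Image, instruction: str) -> Action:
        # Find the (row, col) holding the largest intensity value.
        row, col = max(
            ((r, c) for r in range(len(image)) for c in range(len(image[r]))),
            key=lambda rc: image[rc[0]][rc[1]],
        )
        # Only close the gripper when the instruction asks for a pick.
        return Action(row, col, close_gripper="pick" in instruction.lower())


policy: Policy = BrightestPixelPolicy()
scene = [
    [0, 0, 0, 0],
    [0, 0, 9, 0],
    [0, 0, 0, 0],
]
print(policy.act(scene, "Pick up the screw"))
# Action(row=1, col=2, close_gripper=True)
```

Nothing in the calling code names a coordinate. Move the bright spot in `scene` and the same instruction yields a different action, which is the property the old scripts lacked.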

It generalizes. It learns. It adapts.

The Grammar of Skills

In philosophy, there is a concept called tacit knowledge, a term coined by Michael Polanyi. It’s the kind of knowledge that is difficult to transfer to another person by writing it down or verbalizing it. Think of a master mechanic feeling the give of a cross-threaded bolt. Or a welder who knows, just by the look and sound of the arc, that the weld is going wrong.

For the last century, we assumed that as machines got smarter, they would eventually replace this human intuition. We thought the blue-collar worker was the most vulnerable. But it turns out that isn’t true at all.

We are moving from a world of pre-written scripts to a world of rapidly acquired skills.

The Machine has the Sensor: It can process a billion data points in a heartbeat. It can monitor the temperature of a furnace to the thousandth of a degree.

The Human has the Sense: We understand the “Why.” We understand the history of the metal. We understand the stakes.

In the old grammar of work, the human was the operator. You pushed the buttons. You cleared the jams. You were an extension of the machine’s script. In the new grammar, the human is the instructor.

The Desirable Difficulty

When we make a machine too autonomous, we lose our grip on the process. But when we pair a VLA-enabled robot with a skilled human, we create a feedback loop. The robot handles the geometry of the grasp—the tedious, repetitive calculation of motion—while the human provides the judgment of the task.
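One way to picture that feedback loop is the sketch below. It is an illustration under stated assumptions, not a real robot framework: the `Cell` class, the `instructor` function, and the handling rules written as plain strings are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

# A reviewer sees (part, proposed plan) and returns a correction, or None to approve.
Reviewer = Callable[[str, str], Optional[str]]


@dataclass
class Cell:
    """Hypothetical work cell: the robot proposes, the human judges."""

    learned: Dict[str, str] = field(default_factory=dict)  # corrections kept per part

    def propose(self, part: str) -> str:
        # Robot side: reuse a learned handling rule, else fall back to a default.
        return self.learned.get(part, "standard grasp")

    def run(self, part: str, review: Reviewer) -> str:
        plan = self.propose(part)
        correction = review(part, plan)      # human side: None means approved
        if correction is not None:
            self.learned[part] = correction  # feed the judgment back into the cell
            plan = correction
        return plan


def instructor(part: str, plan: str) -> Optional[str]:
    # Assumed rule of thumb, standing in for a worker's tacit knowledge.
    if part == "rusted bolt" and plan == "standard grasp":
        return "low-torque grasp"
    return None


cell = Cell()
print(cell.run("rusted bolt", instructor))                 # low-torque grasp
print(cell.run("rusted bolt", lambda part, plan: None))    # low-torque grasp
print(cell.run("clean bolt", instructor))                  # standard grasp
```

The second call gets the corrected plan without the instructor stepping in, because the first correction was stored. The human teaches once, and the machine carries the lesson forward.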

It’s a subtle shift, but it’s a powerful one. It means that the value of a worker in 2026 isn’t their ability to follow instructions. It’s their ability to translate their tacit knowledge into instructions the machine can act on.

The Moral of the Machine

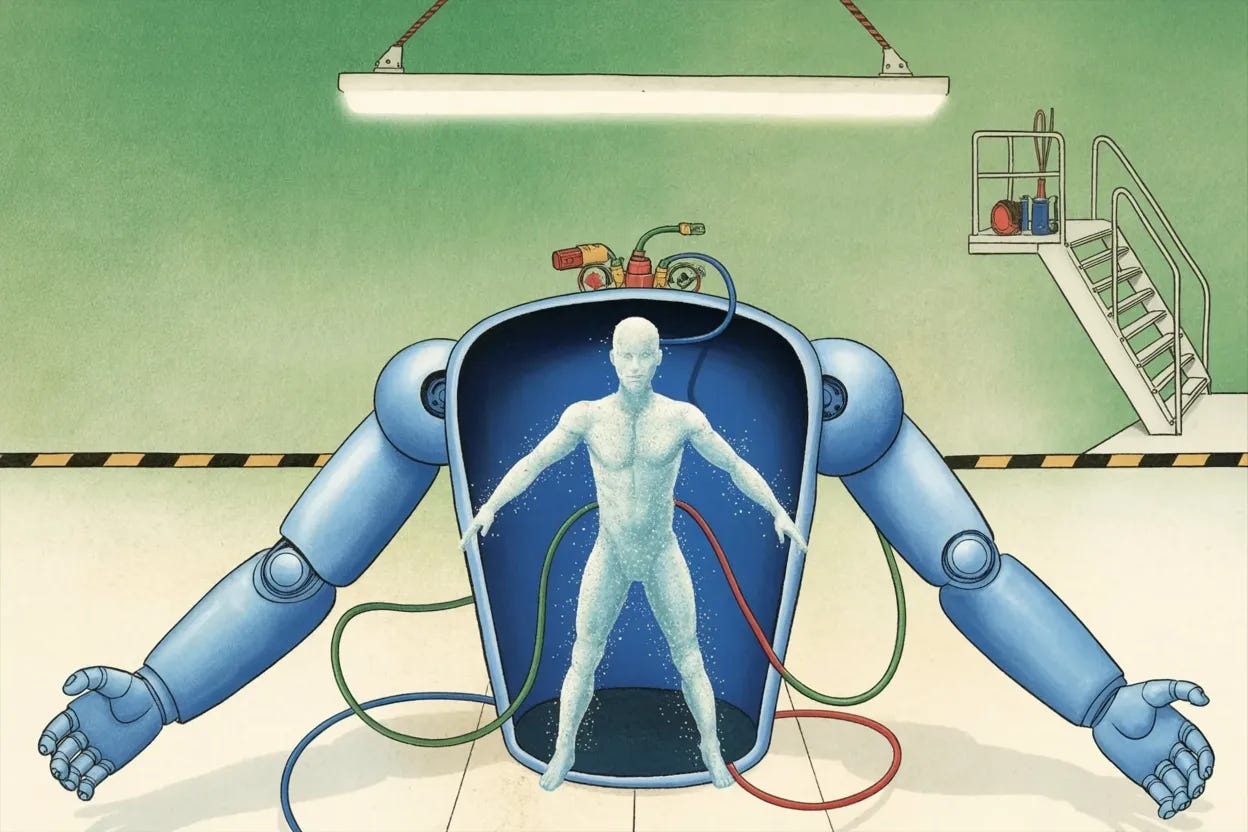

We often fear that technology will make us obsolete. We look at the robot in the warehouse and see a replacement. But if we look closer, we see something else. We see a tool that is finally learning to speak our language.

The Unimate became obsolete because it was deaf and blind to the messiness of reality. It required the world to be perfect. But the world is never perfect. By giving machines the ability to see rust and understand torque, we are making them more like us.

The future of work isn’t a factory full of humming, lonely machines. It’s a partnership. The machine provides the precision; we provide the soul. And in that marriage of sensor and sense, we might just find a way to make work more human than it has ever been before.